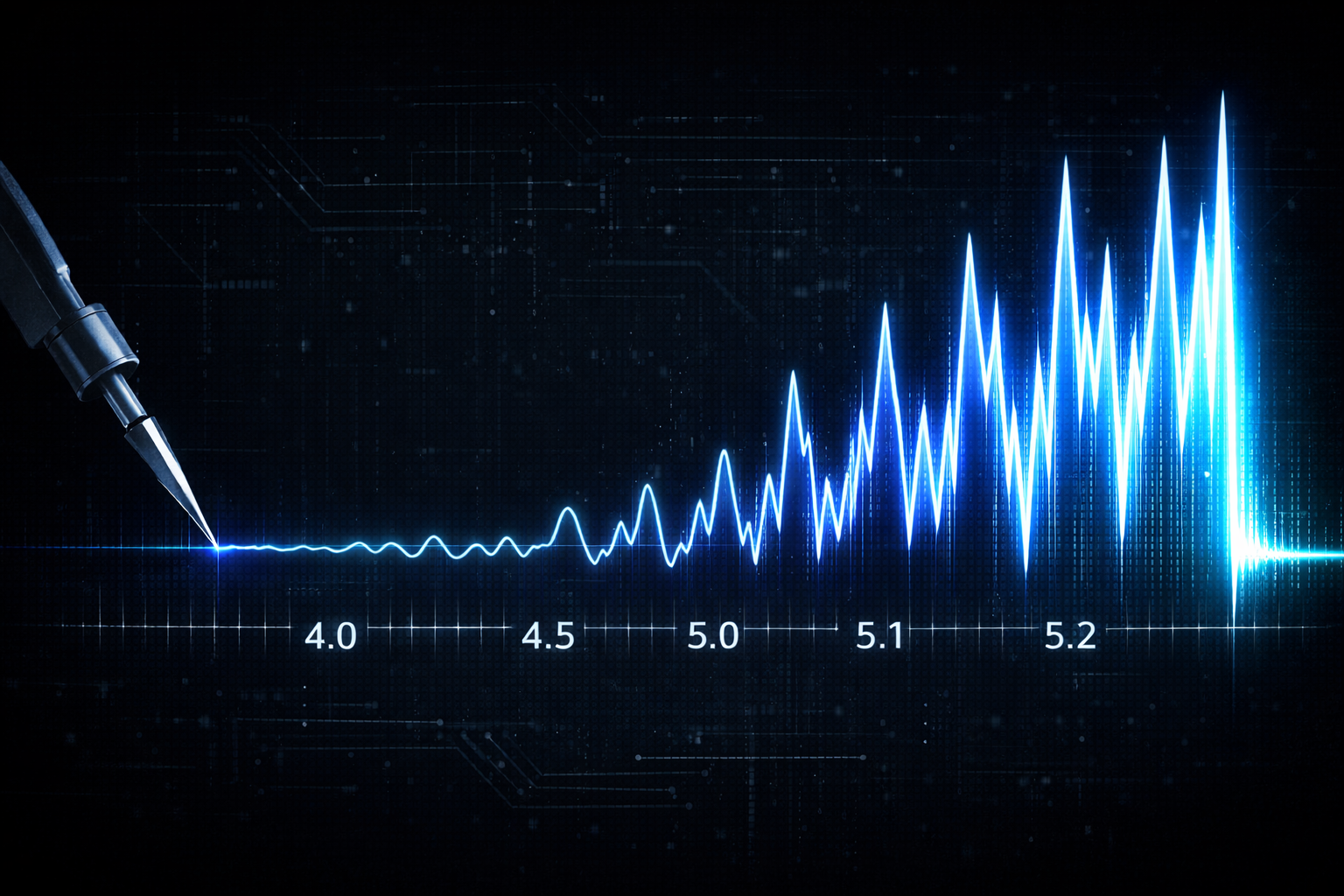

The Richter Scale of AI

When a 5.0 earthquake hits, it rattles windows. A 5.1 doesn't just rattle a little more. It releases roughly 40% more energy. That's how logarithmic scales work. Small numbers, massive differences.
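For anyone who wants to check the arithmetic, here is a minimal sketch. It assumes the standard magnitude-energy relation, in which the logarithm of released energy rises by 1.5 for each whole step in magnitude. The function name `energy_ratio` is just an illustrative choice.

```python
def energy_ratio(m1: float, m2: float) -> float:
    """Approximate ratio of energy released at magnitude m2 versus m1.

    Assumes log10(energy) grows by 1.5 per unit of magnitude,
    the commonly used magnitude-energy relation.
    """
    return 10 ** (1.5 * (m2 - m1))


# One decimal point: about 1.4 times the energy.
print(round(energy_ratio(5.0, 5.1), 2))  # 1.41

# One whole number: about 32 times the energy.
print(round(energy_ratio(6.0, 7.0), 1))  # 31.6
```

The gap between two numbers on the scale tells you the multiplier, not the amount added.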

AI is following the same pattern.

Anthropic recently moved from Claude Opus 4.5 to Opus 4.6. A decimal point. On paper, it looks incremental. In practice, the leap in reasoning, contextual awareness, and agentic capability was anything but minor. And they're not alone. OpenAI's progression from GPT-4 to GPT-4o to o1 brought similar step-changes in capability. Google's Gemini models have followed the same trajectory. Meta's open-weight Llama family keeps closing the gap with each release. Across the board, what looks like a version bump is often a generational shift in what these systems can actually do.

Here's why this matters for security and technology leaders:

The AI risk assessment you completed six months ago? It may already be outdated. The vendor questionnaire you sent last quarter about AI usage in your supply chain? The answers have likely changed. The policies you wrote to govern acceptable use of AI tools? They were written for a less capable technology.

We're not on a linear curve. We're on an exponential one, the kind a logarithmic scale exists to measure. And just like seismologists learned to respect the difference between a 6.0 and a 7.0, roughly 32 times the energy, technology leaders need to respect the difference between "that's a neat tool" and "that just replaced a workflow."

The organizations that treat AI governance as a one-time project will be the ones caught off guard. The ones that build adaptive frameworks, with regular reassessment cycles and flexible policies, will be the ones still standing when the next decimal point drops.

A small number on the scale. A seismic shift underneath.

#Cybersecurity #AI #ArtificialIntelligence #CISO #GRC #RiskManagement #vCISO